Last week, in a special livestream for members, I tested using the Apple Vision Pro developer strap to capture my perspective in real time and share what it’s like to use spatial computing:

In this video, I’m testing how to capture, record, and stream using the Apple Vision Pro and Ecamm Live.

This method requires an Apple Vision Pro, the Apple Developer strap, a USB-C cable (preferably extra long), QuickTime for Mac, and Ecamm Live.

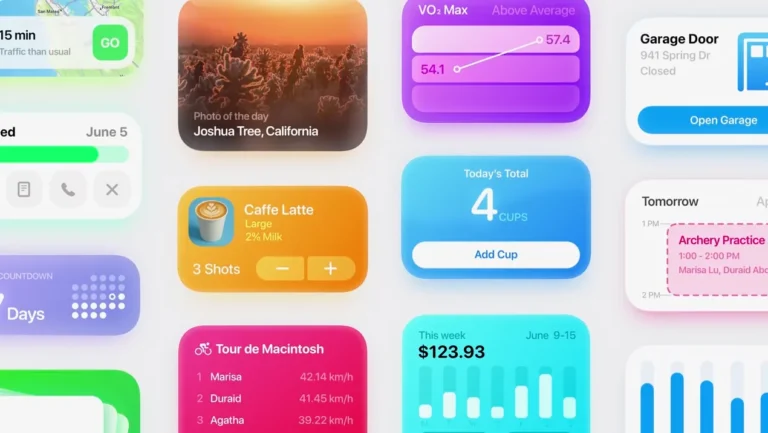

Become a member to get access to the video, plus:

- New shortcuts on an ongoing basis

- Extra ways to browse the catalog when you’re signed in

- Prerelease notes & workflows I’m putting together